Demonstrator: ingestion, correlation and action on systems

Today we want to share a demonstrator that we have built on the Onesait Platform and that we have already used to show our clients some of the Platform's capabilities.

Specifically, this demonstrator aims to detect, notify and solve problems in multiple remote systems arranged in a topological network (modeled as a graph entity), using the information contained in the raw logs of these systems.

Platform modules used

This demonstrator requires the use of certain modules, such as:

- Dataflow: it carries most of the logic and is in charge of several tasks:

  - Actively loading logs, normalizing them and standardizing them into a common output, so that they can be processed uniformly regardless of the source system.

  - Detecting problems in the systems from their information flow (the processed logs), and generating alerts for the problems detected.

  - Correlating the source of the information with the rest of the systems through the topological network.

  - Acting on the topological network itself, so that changes reported by log events are reflected in it.

- Kafka on Onesait Platform: combined with Dataflow, it makes it simple to scale loads and parallelize processing. It is also the basis of a robust, production-ready ingestion system.

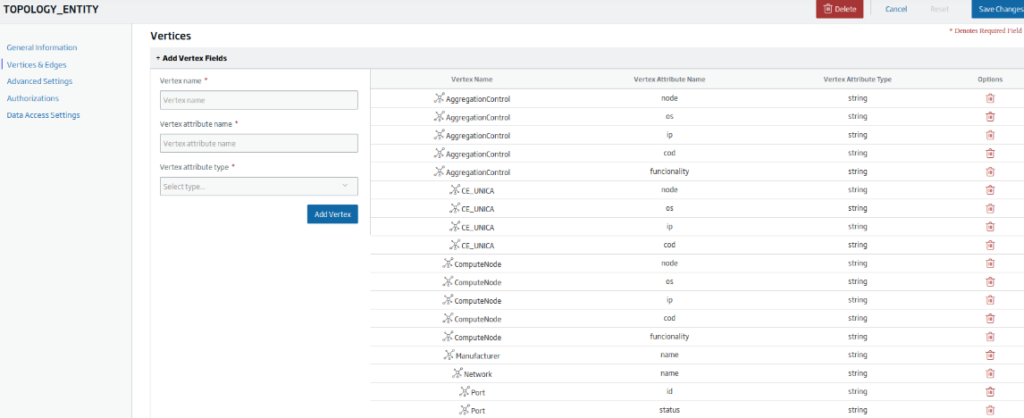

- Graph entities: since the Platform supports entities backed by graph data (Nebula Graph), the topological system has been modeled as one more entity. This network can be queried (to visualize it as a graph) and written to (to load the virtualized network and update it in real time).

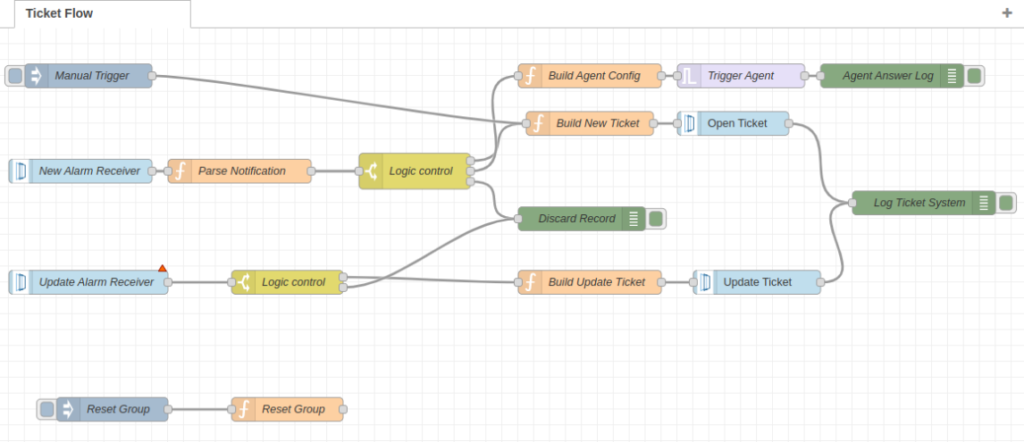

- FlowEngine: it is in charge of managing the logical flows, alarms and events, with functions such as:

  - Opening and updating tickets in third-party systems.

  - Releasing fixes for remote systems.

- Dashboard Engine/Web Projects: where the complete application is assembled from reusable components for viewing and managing alarms, logs, topological viewers, correctors, etc.

Flow architecture

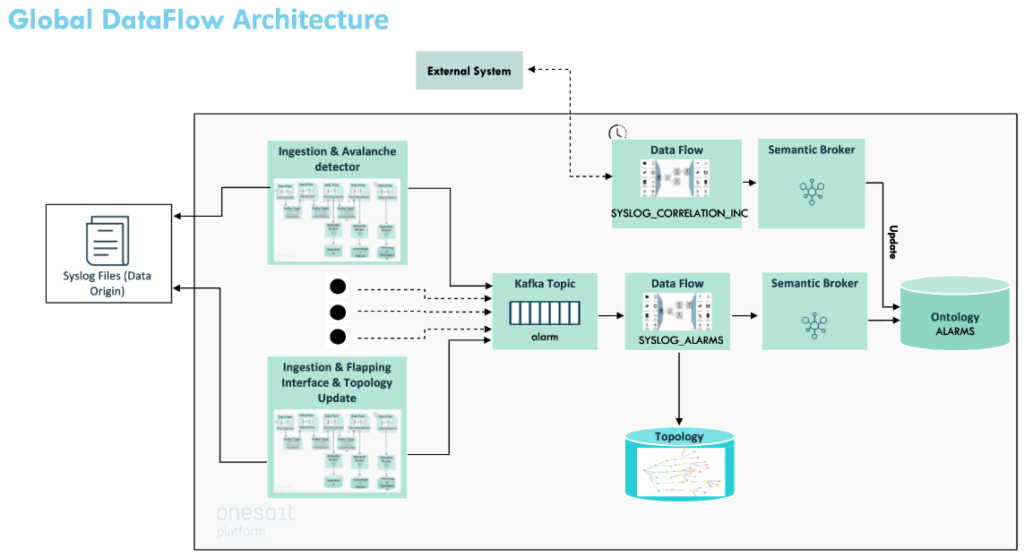

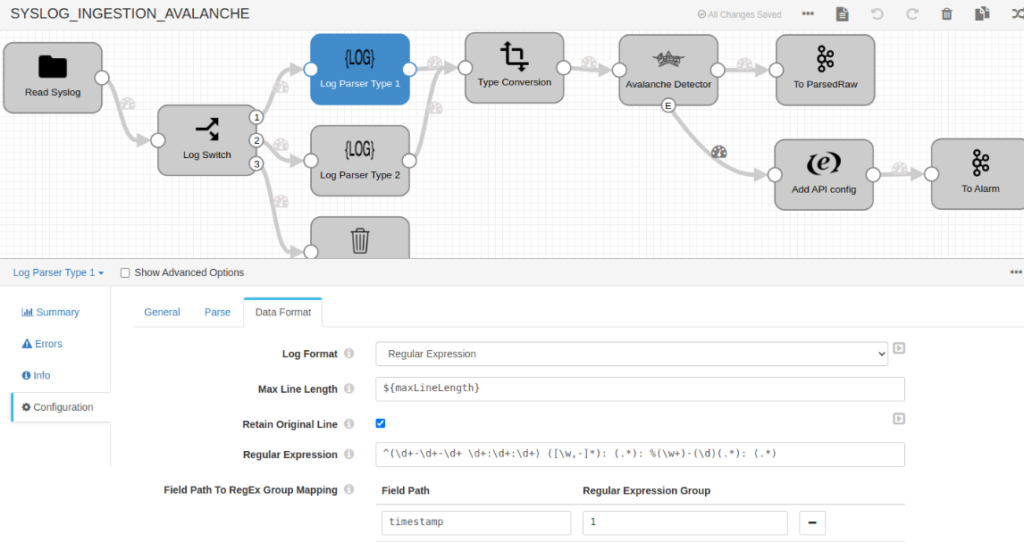

The Dataflow architecture can be seen in the following image. As you can see, alarm information receives a common treatment, supported by the system’s own standardization of raw data and its event detection capability.

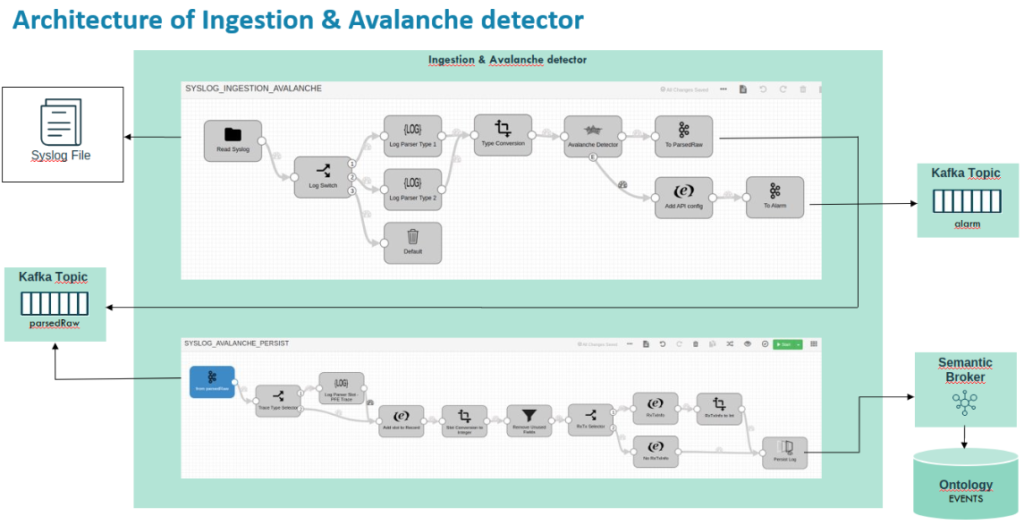

The system logs (the source shown on the left) go through different information flows, which first standardize them and then apply different problem detectors. For example, take the following flow (two Dataflows communicating via Kafka) which, based on log rates, detects excessive load problems in the system and generates alerts.

Focusing on the first of the two, you can see how the logs are processed with different regular expressions that extract and structure the raw content.
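To give an idea of what this regex-based standardization involves, here is a minimal Python sketch. It is not the demonstrator's actual Dataflow configuration: the two raw log formats, the `PATTERNS` table, the `normalize` function and the output field names are all assumptions made up for illustration.

```python
import re
from datetime import datetime, timezone

# Hypothetical raw formats for two source systems (assumed for this sketch,
# not taken from the demonstrator).
PATTERNS = {
    # e.g. "2023-05-01T10:00:00Z router-1 ERROR link down"
    "iso_prefixed": re.compile(
        r"^(?P<ts>\d{4}-\d{2}-\d{2}T\d{2}:\d{2}:\d{2}Z) "
        r"(?P<host>\S+) (?P<level>[A-Z]+) (?P<msg>.*)$"
    ),
    # e.g. "[WARN] switch-7 @ 1682935200 :: high cpu"
    "bracketed": re.compile(
        r"^\[(?P<level>[A-Z]+)\] (?P<host>\S+) @ (?P<ts>\d+) :: (?P<msg>.*)$"
    ),
}


def normalize(line):
    """Return a record in a common schema, or None if no pattern matches."""
    for source_format, pattern in PATTERNS.items():
        match = pattern.match(line)
        if match is None:
            continue
        fields = match.groupdict()
        if source_format == "bracketed":
            # This format carries a Unix epoch in seconds.
            ts = datetime.fromtimestamp(int(fields["ts"]), tz=timezone.utc)
        else:
            ts = datetime.strptime(fields["ts"], "%Y-%m-%dT%H:%M:%SZ")
            ts = ts.replace(tzinfo=timezone.utc)
        return {
            "timestamp": ts.isoformat(),
            "host": fields["host"],
            "level": fields["level"],
            "message": fields["msg"],
            "source_format": source_format,
        }
    return None
```

Whatever the source system, every line that matches ends up with the same fields, which is what lets the later detectors treat all logs in the same way. Lines that match no pattern return `None` here; a real flow would probably route them to an error stream instead.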

You can also see the incident detector itself, which generates alarms that are centralized in a dedicated alarm topic.

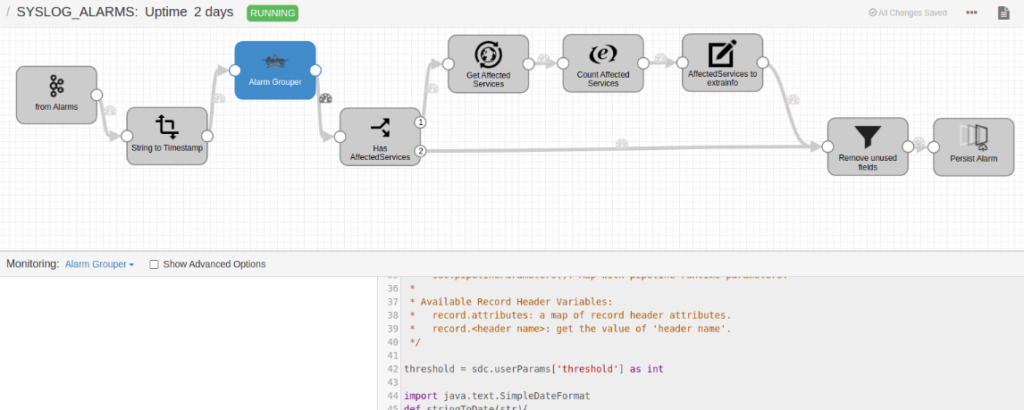

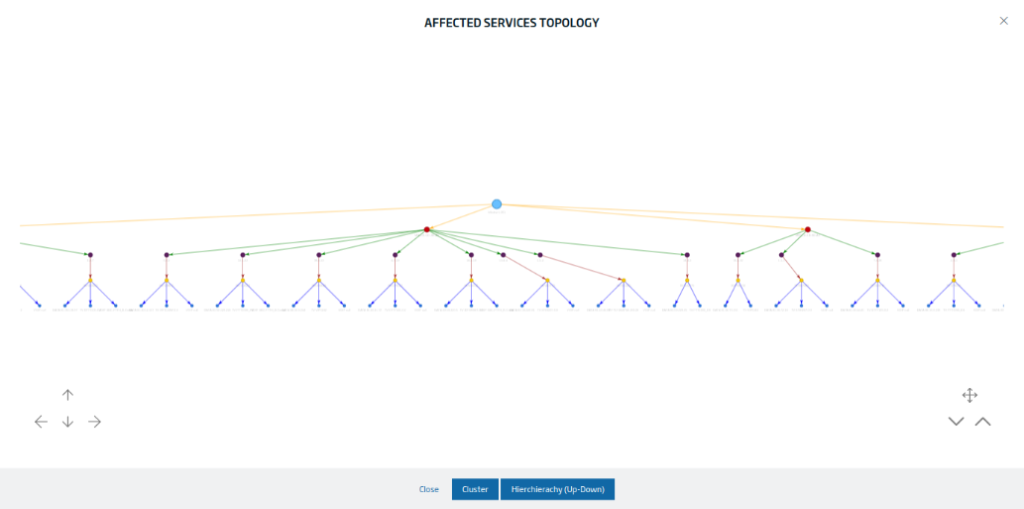
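As a rough illustration of how a detector based on log rates might work, the following Python sketch counts the logs received per host in a sliding time window and produces an alarm when a threshold is exceeded. The `RateDetector` class, its thresholds and the alarm fields are hypothetical, and the sketch assumes that timestamps arrive in non-decreasing order for each host. In the demonstrator this logic lives in Dataflow stages and the alarm is published to a Kafka topic, which is not reproduced here.

```python
from collections import defaultdict, deque


class RateDetector:
    """Sliding-window counter per host (illustrative, not the real detector)."""

    def __init__(self, window_seconds=60.0, max_events=100):
        self.window_seconds = window_seconds
        self.max_events = max_events
        self._events = defaultdict(deque)  # host -> timestamps inside the window

    def observe(self, host, ts):
        """Register one log at time `ts` (seconds); return an alarm dict or None."""
        window = self._events[host]
        window.append(ts)
        # Drop timestamps that have fallen out of the window.
        while window and ts - window[0] > self.window_seconds:
            window.popleft()
        if len(window) > self.max_events:
            return {
                "type": "EXCESSIVE_LOAD",  # hypothetical alarm type
                "host": host,
                "count": len(window),
                "window_seconds": self.window_seconds,
                "timestamp": ts,
            }
        return None
```

For example, with `RateDetector(window_seconds=10, max_events=3)`, the fourth log received from the same host within ten seconds returns an alarm, while a log arriving after a quiet period returns `None` again.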

Another important Dataflow handles alarm processing: among other things, it correlates the different alarms with the topological network in order to extract the maximum information from the system.
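The idea behind this correlation can be sketched in a few lines of Python. Here the topological network is a small in-memory adjacency dictionary standing in for the graph entity (the demonstrator queries Nebula Graph, which is not shown), and the node names, `neighbours_within` and `enrich_alarm` are assumptions for illustration only. Given an alarm on one host, a breadth-first search finds the nodes within a few hops and attaches them to the alarm.

```python
from collections import deque

# Hypothetical topology: node -> directly connected nodes.
TOPOLOGY = {
    "core-1": ["agg-1", "agg-2"],
    "agg-1": ["core-1", "edge-1", "edge-2"],
    "agg-2": ["core-1", "edge-3"],
    "edge-1": ["agg-1"],
    "edge-2": ["agg-1"],
    "edge-3": ["agg-2"],
}


def neighbours_within(topology, start, max_hops):
    """Breadth-first search: map each node within max_hops of start to its distance."""
    distances = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if distances[node] == max_hops:
            continue  # do not expand beyond the hop limit
        for nxt in topology.get(node, []):
            if nxt not in distances:
                distances[nxt] = distances[node] + 1
                queue.append(nxt)
    del distances[start]
    return distances


def enrich_alarm(alarm, topology, max_hops=1):
    """Return a copy of the alarm with the nearby nodes attached."""
    related = neighbours_within(topology, alarm["host"], max_hops)
    return {**alarm, "related_nodes": sorted(related)}
```

An alarm raised on `edge-3` and enriched with `max_hops=2` would carry `agg-2` and `core-1` as related nodes, which is the kind of context an operator needs to judge how far a problem may spread.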

Web Application

Within the web application, there are different tools that allow for the administration and complete visualization of all the elements in the system, using the Web Project tools and the Platform’s Dashboards.

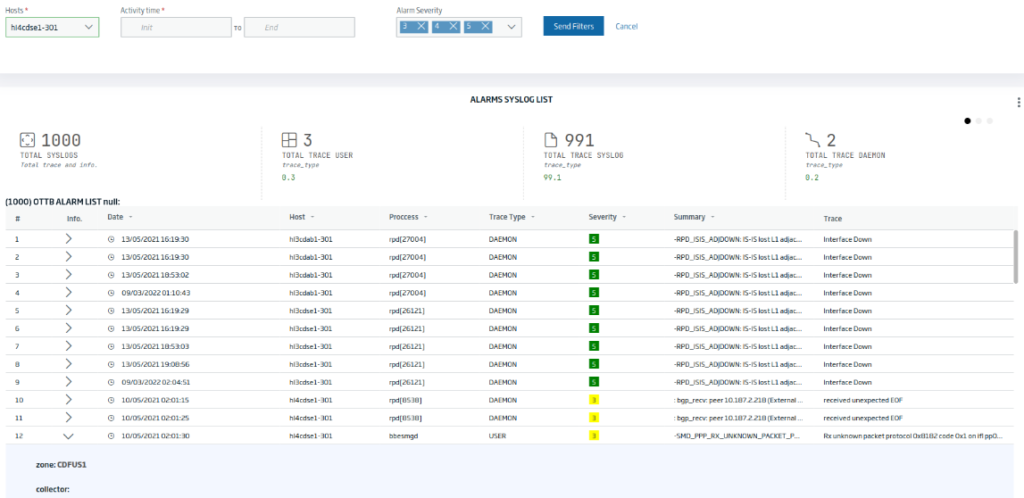

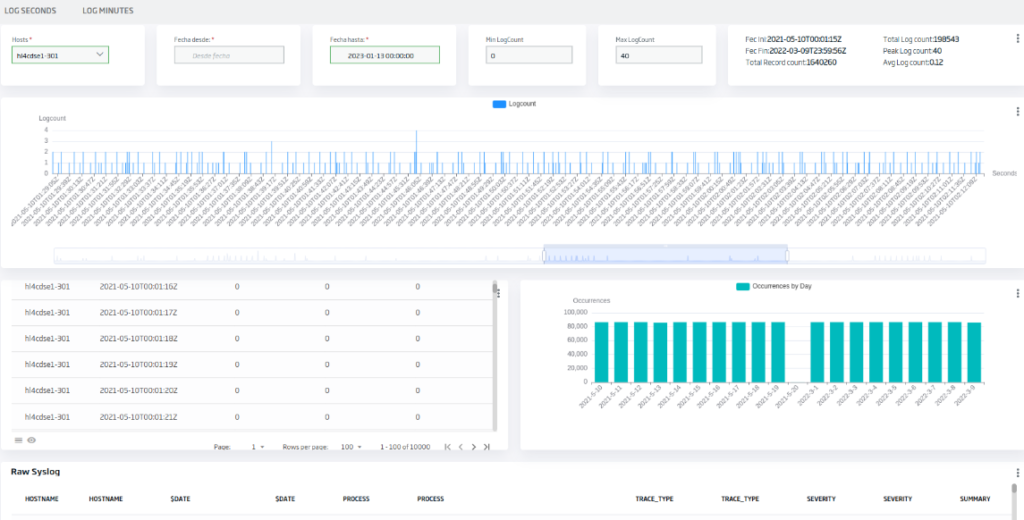

For instance, the log viewers would look like this:

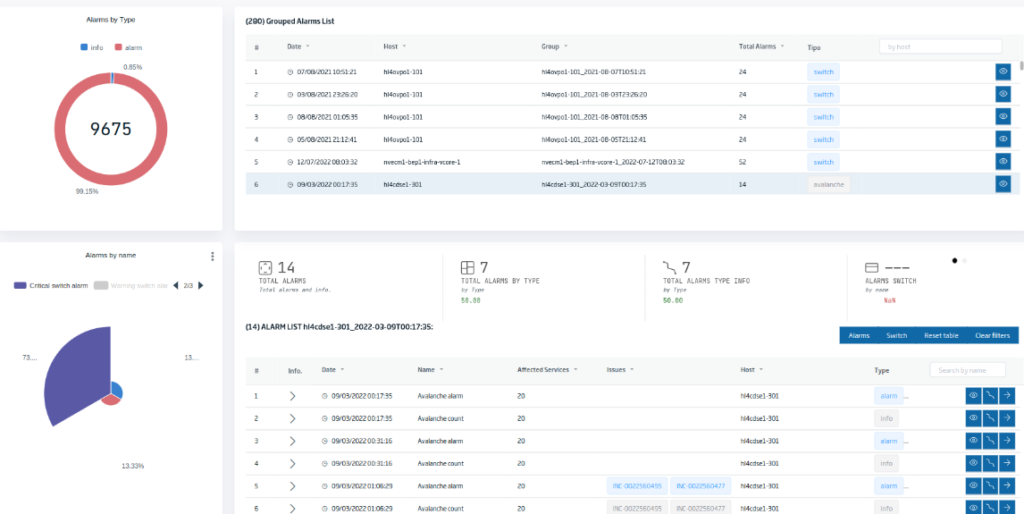

The alarm managers can run the correctors and also offer a complete topological view:

The Trace Analyzer would be displayed as follows:

Conclusions

As we have seen, building an entire alarm management system (or other systems) is relatively easy thanks to the power of the different modules used in this Onesait Platform demonstrator.

We hope you found this interesting. If you have any questions about it, do not hesitate to leave us a comment, and if you would like us to show you this demonstration live, contact us to make an appointment at contact@onesaitplatform.com.

Header image: Kari Shea at Unsplash