Deploying nginx to Kubernetes

nginx is a lightweight, high-performance, open source web server and reverse proxy that has also grown over time into an HTTP cache, load balancer, and email proxy. Its strength is handling a large number of concurrent connections at high speed, which has earned it a 20% share of the web server market.

Rather than creating a new process for each web request it receives, nginx uses an asynchronous, event-based approach in which each worker process handles many requests in a single thread.

As we can imagine, this is of great interest, so let's see how we can deploy nginx as a container on Kubernetes.

Choosing a Docker image

There are several options available, but the two most widespread are the following ones:

nginx

This Docker Hub image is the generic, main one. If our environment is orchestrated with Docker, it is the best option, since it is the easiest to use. On Kubernetes, however, it can cause several problems, mostly related to permissions in OpenShift environments.

In OpenShift, by default no container may run as the root user, and the nginx image runs as root by default. In addition, its initial configuration file listens on port 80, a privileged port that a non-root user cannot bind.

bitnami/nginx

This image comes preconfigured to run in Kubernetes environments and, thanks to its default configuration, it is a good fit for OpenShift environments.

For this post, we will focus on this image.
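To make the non-root constraint concrete, the sketch below declares a Pod that refuses to start any container that would run as root. It is a minimal illustration that assumes the standard Kubernetes `securityContext` fields; the Pod name `nginx-nonroot-check` is hypothetical and not part of the original setup.

```yaml
# Hypothetical Pod used only to illustrate the non-root constraint.
apiVersion: v1
kind: Pod
metadata:
  name: nginx-nonroot-check   # assumed name, not from the original post
spec:
  containers:
    - name: nginx
      image: bitnami/nginx:latest
      securityContext:
        # The kubelet rejects the container if its image would run as UID 0.
        runAsNonRoot: true
        allowPrivilegeEscalation: false
      ports:
        - containerPort: 8080   # unprivileged port used by the image's default config
```

With the generic nginx image, which runs as root by default as described above, the same Pod would be expected to fail this check. That is similar in spirit to the restriction found in OpenShift.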

bitnami/nginx configuration

We will start from the «bitnami/nginx:latest». Podemos ejecutarlo de manera sencilla con el siguiente comando:

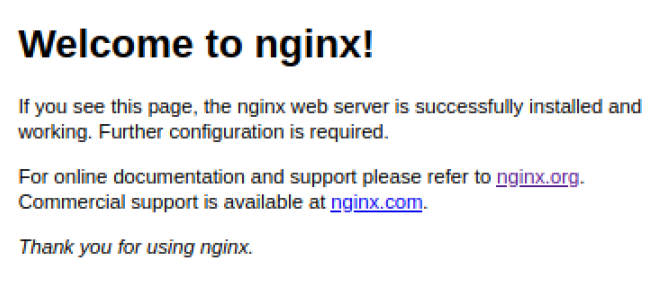

Docker run --name nginx -p 8080:8080 bitnami/nginx:latest By accessing the url «http://localhost:8080», we can see our nginx home page:

To customize the configuration, we must create our own configuration files and mount them on top of our container. We have several options:

Modifying the default configuration (nginx.conf)

We can change the default configuration of our nginx server by editing the main file, «nginx.conf», located at «/opt/bitnami/nginx/conf/nginx.conf».
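One way to try this locally is to mount a customized copy of the file over the default one. The following Docker Compose file is a minimal sketch: it assumes a local file named `./nginx.conf` sitting next to it (a hypothetical path) and reuses the container path and port mentioned in this post.

```yaml
# docker-compose.yml (sketch; ./nginx.conf is an assumed local file)
services:
  nginx:
    image: bitnami/nginx:latest
    ports:
      - "8080:8080"   # host:container, matching the port used earlier
    volumes:
      # Replace the image's main configuration with our own, read-only.
      - ./nginx.conf:/opt/bitnami/nginx/conf/nginx.conf:ro
```

Because the mount replaces the whole file, the custom «nginx.conf» has to be a complete configuration, not just the directives we want to change.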

By default, «nginx.conf» has content similar to the following:

    # Based on https://www.nginx.com/resources/wiki/start/topics/examples/full/#nginx-conf
    # user www www; ## Default: nobody

    worker_processes auto;
    error_log "/opt/bitnami/nginx/logs/error.log";
    pid "/opt/bitnami/nginx/tmp/nginx.pid";

    events {
        worker_connections 1024;
    }

    http {
        include mime.types;
        default_type application/octet-stream;
        log_format main '$remote_addr - $remote_user [$time_local] '
                        '"$request" $status $body_bytes_sent "$http_referer" '
                        '"$http_user_agent" "$http_x_forwarded_for"';
        access_log "/opt/bitnami/nginx/logs/access.log";
        add_header X-Frame-Options SAMEORIGIN;

        client_body_temp_path "/opt/bitnami/nginx/tmp/client_body" 1 2;
        proxy_temp_path "/opt/bitnami/nginx/tmp/proxy" 1 2;
        fastcgi_temp_path "/opt/bitnami/nginx/tmp/fastcgi" 1 2;
        scgi_temp_path "/opt/bitnami/nginx/tmp/scgi" 1 2;
        uwsgi_temp_path "/opt/bitnami/nginx/tmp/uwsgi" 1 2;

        sendfile on;
        tcp_nopush on;
        tcp_nodelay off;
        gzip on;
        gzip_http_version 1.0;
        gzip_comp_level 2;
        gzip_proxied any;
        gzip_types text/plain text/css application/javascript text/xml application/xml+rss;
        keepalive_timeout 65;
        ssl_protocols TLSv1 TLSv1.1 TLSv1.2 TLSv1.3;
        ssl_ciphers HIGH:!aNULL:!MD5;
        client_max_body_size 80M;
        server_tokens off;

        include "/opt/bitnami/nginx/conf/server_blocks/*.conf";

        # HTTP Server
        server {
            # Port to listen on, can also be set in IP:PORT format
            listen 8080;

            include "/opt/bitnami/nginx/conf/bitnami/*.conf";

            location /status {
                stub_status on;
                access_log off;
                allow 127.0.0.1;
                deny all;
            }
        }
    }

As we can see in the file, the http block contains an «include» directive for all the files matching «/opt/bitnami/nginx/conf/server_blocks/*.conf». We can also add locations automatically to the main server on port 8080 by placing files in the path «/opt/bitnami/nginx/conf/bitnami/*.conf».

Adding locations to the default server

Locations can be added simply by placing files in the path «/opt/bitnami/nginx/conf/bitnami/*.conf». For example:

my_server.conf

    location /my-endpoint {
        proxy_pass http://my-service:8080;
        proxy_set_header X-request_uri "$request_uri";
        proxy_set_header Host $http_host;
        proxy_read_timeout 120;
    }

This file would be placed at «/opt/bitnami/nginx/conf/bitnami/my_server.conf». At startup, nginx loads it automatically and proxies our requests to the selected service when we access the URL:

http://localhost:8080/my-endpoint

Adding different servers (server_blocks)

To add different «server_blocks» while keeping the original «nginx.conf» file, we can define each server in its own file in the path «/opt/bitnami/nginx/conf/server_blocks/*.conf». The following could be an example:

my_server_block.conf

    server {
        listen 8443;
        server_name "dev-caregiver.cwbyminsait.com";
        add_header Strict-Transport-Security "max-age=31536000" always;

        location /my-endpoint {
            proxy_pass http://my-service:8080;
            proxy_set_header X-request_uri "$request_uri";
            proxy_set_header Host $http_host;
            proxy_read_timeout 120;
        }
    }

This file will be placed at «/opt/bitnami/nginx/conf/server_blocks/my_server_block.conf». At startup, nginx loads it automatically and starts listening on the new port for this server. As long as port 8443 is also published from the container, we can access the new endpoint through the route:

http://localhost:8443/my-endpoint

How to deploy bitnami/nginx in Kubernetes

First we will need to create a new deployment:

    kind: Deployment
    apiVersion: apps/v1
    metadata:
      name: nginx-balancer
      labels:
        app: nginx-balancer
        app.kubernetes.io/name: nginx-balancer
        app.kubernetes.io/instance: nginx-balancer
        app.kubernetes.io/component: nginx-proxy
        app.kubernetes.io/part-of: nginx-balancer
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: nginx-balancer
      template:
        metadata:
          labels:
            app: nginx-balancer
        spec:
          containers:
            - resources:
                limits:
                  cpu: 100m
                  memory: 250Mi
                requests:
                  cpu: 50m
                  memory: 50Mi
              readinessProbe:
                tcpSocket:
                  port: 8080
                initialDelaySeconds: 20
                timeoutSeconds: 5
                periodSeconds: 10
                successThreshold: 2
                failureThreshold: 3
              livenessProbe:
                httpGet:
                  path: /
                  port: 8080
                  scheme: HTTP
                initialDelaySeconds: 20
                timeoutSeconds: 5
                periodSeconds: 10
                successThreshold: 1
                failureThreshold: 5
              name: nginx-balancer
              ports:
                - name: 8443tcp84432
                  containerPort: 8443
                  protocol: TCP
                - name: 8080tcp8080
                  containerPort: 8080
                  protocol: TCP
              imagePullPolicy: Always
              image: 'bitnami/nginx:latest'
          restartPolicy: Always

Now that we have created our original deployment, we can start adding our server blocks and the different locations that we want.

To create the server block, we must create a volume and mount it at the path «/opt/bitnami/nginx/conf/server_blocks». To do this, we first create a «ConfigMap» that we will later mount in the previous deployment.

    kind: ConfigMap
    apiVersion: v1
    metadata:
      name: server-block-map
    data:
      my_server_block.conf: |
        ########################### SERVER BLOCK ####################
        server {
          listen 8443;
          add_header Strict-Transport-Security "max-age=31536000" always;

          location /my-endpoint {
            proxy_pass http://my-service:8080;
            proxy_set_header X-request_uri "$request_uri";
            proxy_set_header Host $http_host;
            proxy_read_timeout 120;
          }
        }
      other_server_block.conf: |
        ######### OTHER SERVER #######....

Then we must make the following changes to our original deployment:

- Add our volume from the ConfigMap.

- Mount the volume on top of the servers directory.

    ...
    spec:
      ...
      template:
        ...
        spec:
          volumes:
            - name: config-servers
              configMap:
                name: server-block-map
                defaultMode: 484
          containers:
            ...
              volumeMounts:
                - name: config-servers
                  mountPath: /opt/bitnami/nginx/conf/server_blocks

This way, our nginx service is configured. We can check it by accessing the nginx container and making a request to:

http://localhost:8443/my-endpoint

Easy and simple, right? If you have any questions, leave us a comment.
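The same approach should also work for the extra locations on the default server described earlier. The sketch below is an assumption-based variant of the server block example: the ConfigMap name `locations-map` and the volume name `config-locations` are hypothetical. The file is mounted with `subPath` so that any other files already present in «/opt/bitnami/nginx/conf/bitnami» are left untouched.

```yaml
kind: ConfigMap
apiVersion: v1
metadata:
  name: locations-map              # hypothetical name
data:
  my_server.conf: |
    location /my-endpoint {
      proxy_pass http://my-service:8080;
      proxy_set_header X-request_uri "$request_uri";
      proxy_set_header Host $http_host;
      proxy_read_timeout 120;
    }
---
# Fragment to merge into the pod template of the nginx-balancer Deployment.
spec:
  template:
    spec:
      volumes:
        - name: config-locations   # hypothetical name
          configMap:
            name: locations-map
      containers:
        - name: nginx-balancer
          volumeMounts:
            - name: config-locations
              # Mount a single file instead of replacing the whole directory.
              mountPath: /opt/bitnami/nginx/conf/bitnami/my_server.conf
              subPath: my_server.conf
```

Keep in mind that files mounted through `subPath` are not refreshed when the ConfigMap changes, so the pod has to be restarted to pick up edits.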