Data Governance in Onesait Platform

Companies today run into many problems with data and its management. These problems cause operational issues and make it impossible to use data as a strategic asset.

These common data management shortcomings affect projects along several dimensions:

- Unavailability: heterogeneous and dispersed information sources make information hard to obtain:

  - Huge volumes of information cannot be processed with current technology.

  - Lack of understanding between Business and Systems areas.

- Lack of credibility:

  - Deficiencies in the quality of the information being handled.

  - Duplicated and inconsistent information.

  - Need to make assumptions when reconstructing historical information.

  - Largely manual preparation of the information.

- No single view: the business units apply different criteria to the information they handle:

  - Information domains with restricted access.

  - Shortage of cross-initiatives to add value.

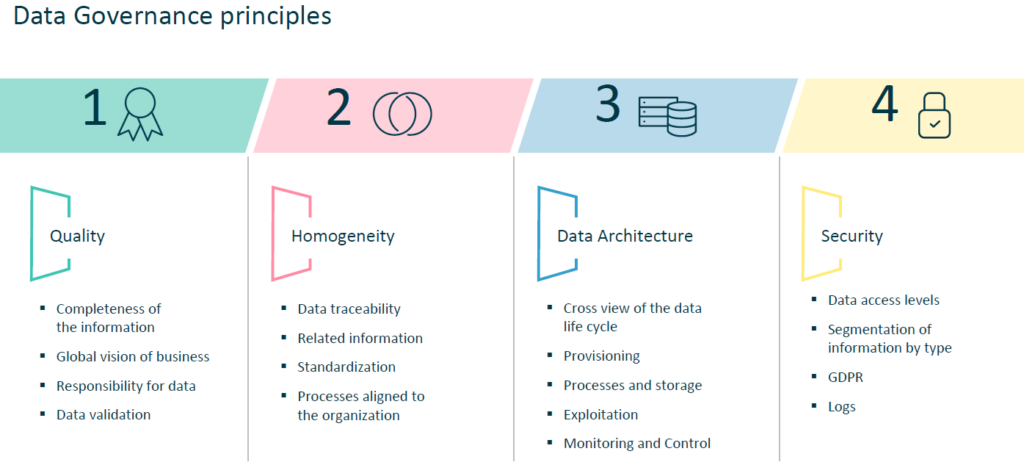

Principles of Data Governance

First of all, what is this “Data Governance” stuff?

It refers specifically to the management of the availability, integrity, usability and security of the data used in an organisation or system, in our case in the Onesait Platform.

This management rests on the following ideas:

- Data quality is determined at the moment the data is captured at its source. Therefore, it is essential to respect a series of principles to ensure it.

- To ensure a homogeneous and coherent understanding of the data, a data dictionary must be defined to keep the information and traceability updated.

- A coherent data architecture must be implemented, from operation to exploitation. The data must be validated and documented, and its traceability must be accurately known.

- For optimal data exploitation, aggregation criteria must be defined, sufficient data granularity must be ensured and processes must be automated.

- To guarantee data security, appropriate information access profiles and options for data encryption and anonymisation must be defined, granted and managed correctly.

- It is essential to define a number of policies and regulations to be respected in order to ensure the correct operation of the Data Governance Model.

Quality principles

Quality implies a number of premises:

- Information completeness: Assurance that the standardized data, taken as a whole, make sense for exploitation.

- Global vision of business / infrastructure: Establishing the business definition of the data so that it can be identified end to end, together with an infrastructure view that shows where the data is physically located.

- Data responsibility: Assignment of responsibilities and roles for information management, data reliability, integrity, provisioning and exploitation, through a governance model that makes it easier to monitor how the data evolves.

- Efficient data: Compliance with the principles of data non-duplication, integrity and consistency.

To ensure this quality, there are some key elements to be observed:

- Standards definition: Definition of the minimum requirements that data must meet to be considered correct (length checks, data types, formats).

- Data validation: Establishment of validation mechanisms that allow the integration of the data in the storage infrastructure according to the established standards, minimizing the number of incidents.

- Cross vision of the data life cycle: Identify the complete data life cycle, so that the data can be managed globally through the traceability and mapping of information.

- Implementation of a methodology: Put well-defined, established procedures in place so that best practices are applied consistently.
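As a rough illustration of how standards definition and data validation fit together, the sketch below checks a record against per-field rules (type, maximum length, format) before it would be stored. It is not Onesait Platform code: `FieldStandard`, `validate_record`, `CUSTOMER_STANDARDS` and the field names are hypothetical, chosen only for this example.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class FieldStandard:
    """Minimum requirements a field must meet (hypothetical structure)."""
    name: str
    expected_type: type
    max_length: Optional[int] = None  # length verification
    pattern: Optional[str] = None     # format, as a regular expression


# Assumed example standards; the fields and limits are illustrative only.
CUSTOMER_STANDARDS = [
    FieldStandard("customer_id", str, max_length=8, pattern=r"C\d+"),
    FieldStandard("age", int),
    FieldStandard("email", str, pattern=r"[^@\s]+@[^@\s]+\.[^@\s]+"),
]


def validate_record(record: dict, standards: list) -> list:
    """Return the list of violations; an empty list means the record passes."""
    errors = []
    for std in standards:
        if std.name not in record:
            errors.append(f"{std.name}: missing")
            continue
        value = record[std.name]
        if not isinstance(value, std.expected_type):
            errors.append(f"{std.name}: expected {std.expected_type.__name__}")
            continue
        text = str(value)
        if std.max_length is not None and len(text) > std.max_length:
            errors.append(f"{std.name}: longer than {std.max_length} characters")
        if std.pattern is not None and re.fullmatch(std.pattern, text) is None:
            errors.append(f"{std.name}: does not match the expected format")
    return errors
```

In this sketch a record would be integrated only when `validate_record` returns an empty list; any violations could be recorded as incidents instead of reaching storage.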

Homogeneity principles

It is necessary to control:

- Data traceability: data must be perfectly identifiable in terms of traceability, mapping and follow-up throughout the processes; this keeps the information cohesive and coherent over time.

- Data must fit the business definition (data accuracy): only the data required by the business need must be considered, which avoids duplicated information, improves accuracy and keeps the databases properly sized.

- Related information: maintenance of data under logical rules and criteria that allow relationships to be established between the different repositories, facilitating aggregation by type.

- Standardization: standards must be defined for the data across the supply, storage and exploitation processes, with consistent naming rules and clear methodologies for defining procedures.

- Support in the infrastructure: use of tools that facilitate the functional and technical traceability of each data item: database administration, database design, and creation and maintenance of the database system.

- Processes aligned to the organization/regulation: procedures must comply with the vision of the entity and with the requirements of the regulatory agents, limiting the margin of information.
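The traceability and standardization points above can be made concrete with a small data dictionary. The sketch below is only an illustration under assumed names (`DictionaryEntry`, `DataDictionary`, `NAMING_RULE` and every field name are hypothetical, not part of the Platform): each entry records its business definition and the entries it is derived from, names must follow one naming rule, and lineage can be walked back to the sources.

```python
import re
from dataclasses import dataclass, field

# Assumed naming standard for this example: lowercase snake_case.
NAMING_RULE = re.compile(r"[a-z][a-z0-9]*(_[a-z0-9]+)*")


@dataclass
class DictionaryEntry:
    name: str
    business_definition: str
    source: str                                        # repository holding the data
    derived_from: list = field(default_factory=list)   # names of upstream entries


class DataDictionary:
    def __init__(self):
        self._entries = {}

    def register(self, entry: DictionaryEntry) -> None:
        """Add an entry, enforcing the naming rule and known upstream entries."""
        if NAMING_RULE.fullmatch(entry.name) is None:
            raise ValueError(f"{entry.name!r} violates the naming standard")
        if entry.name in self._entries:
            raise ValueError(f"{entry.name!r} is already defined")
        unknown = [p for p in entry.derived_from if p not in self._entries]
        if unknown:
            raise ValueError(f"unknown upstream entries: {unknown}")
        self._entries[entry.name] = entry

    def lineage(self, name: str) -> list:
        """Return every upstream entry of `name`, nearest ancestors first."""
        result = []
        pending = list(self._entries[name].derived_from)
        while pending:
            parent = pending.pop(0)
            if parent not in result:
                result.append(parent)
                pending.extend(self._entries[parent].derived_from)
        return result
```

Because upstream entries must be registered before anything derived from them, the dictionary cannot contain cycles, and `lineage` always terminates.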

Data architecture principles

A solid architecture must be based on key principles that support its structure. As we see it, we have to take into account:

- Robustness and flexibility: generating a scalable architecture that allows the agile incorporation of new structural parts and components.

- Unique sources: avoiding duplication in information storage, instead generating synergies and avoiding duplicate work through a detailed information analysis.

- An end-to-end vision: maintaining data traceability at all times to identify the information flow and transformation processes.

The principles to consider are therefore:

- Procurement:

  - Alignment and standardization of input processes.

  - Clear identification of sources, databases, files and applications, as well as delimitation of the type of information: channels, web, risks.

  - Definition of input data validation processes.

- Processes and storage:

  - Definition of the infrastructure and support technology with optimal performance and historical storage capacity.

  - Generation of centralized repositories aligned with corporate strategy, with validated information.

  - Definition of calculation and data transformation processes according to business needs.

  - Data homogeneity and cohesion.

- Exploitation:

  - Identification of the exploitation and distribution tools.

  - Definition of metrics and standards for reporting.

  - Alignment of the means of delivery and the areas in charge of them.

  - Inventory control of outputs to avoid duplication in construction and development.

  - Generation of synergies based on existing developments.

- Monitoring and Control:

  - Documentation: Carry out a correct follow-up of all processes, actions and decisions taken to generate the data architecture, as well as of the data life cycle: supply, extraction, loading, exploitation.

  - Aligned user areas: Alignment of user areas so that each is accountable according to its participation in the process.

  - Global vision: allows the life cycle to be aligned with the corporate structure and procedures.

Principles of aggregation

The data architecture and technological infrastructure must support a definition that contains the criteria for grouping and dimensioning the data, so that aggregation is possible down to the minimum level of detail. Therefore, we consider:

- The accuracy and completeness of the information: To be properly aggregated, the data must have a defined level of accuracy and completeness, so that the risk calculation is accurate and reliable and meets operational needs under both normal and stress conditions.

- Automation: The aim is to eliminate manual work in the supply of information by implementing automatic processes that take the information and run the defined calculations, allowing proper drill-down through the different levels of information.

- Analysis by information axis: Proper aggregation requires analysing the cross-references that the data may have, through any field, with other similar data. This gives a cross-cutting view of the information along its different axes and dimensions, and makes it possible to aggregate and drill down to the minimum level of detail.

- Clear definition of the logical structure: The data model must be structured with exploitation in mind, at different levels and views, and supported by consistency and cohesion between its modules or components.
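To show what aggregating along information axes can look like in practice, here is a minimal sketch that sums one measure over any chosen combination of dimensions, starting from records kept at the minimum level of detail. The `EXPOSURES` data, the `aggregate` helper and the axis names are all assumptions made for this example.

```python
from collections import defaultdict

# Hypothetical detail records at the minimum level of granularity.
EXPOSURES = [
    {"region": "north", "product": "loans", "amount": 100.0},
    {"region": "north", "product": "cards", "amount": 50.0},
    {"region": "south", "product": "loans", "amount": 70.0},
]


def aggregate(rows, axes, measure):
    """Sum `measure` for every combination of values found on `axes`."""
    totals = defaultdict(float)
    for row in rows:
        key = tuple(row[axis] for axis in axes)  # one key per axis combination
        totals[key] += row[measure]
    return dict(totals)


# The same detail data viewed along different axes.
by_region = aggregate(EXPOSURES, ("region",), "amount")
by_region_and_product = aggregate(EXPOSURES, ("region", "product"), "amount")
grand_total = aggregate(EXPOSURES, (), "amount")
```

Keeping the detail records and deriving every aggregate from them automatically is what makes drill-down possible: any total can be broken down again by adding an axis.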

Security principles

To maintain an appropriate level of security, it is essential to consider:

- Data availability: keeping the data available for whoever needs to access it, presenting it in a timely manner and in due form, whether for provisioning, users, applications or processes, and meeting service levels.

- Information segmentation by type: defining criteria to include data in groups (critical, sensitive, etc.) that allow criteria to be assigned according to type, facilitating the management of profiles.

- The combination of technological and business vision: implementing specific physical and logical security rules for data protection in accordance with the level of risk established by the entity.

- Profile management: managing access according to the standards allowed for each type of user:

  - User: with limited vision according to the defined profile.

  - Administrator: access manager with global vision of the information.

- Logs: records that give a global vision of the flow of information and of the people in charge of processing it, whether through data manipulation, extraction, or access to databases, applications, files, etc.
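The interplay between segmentation, profiles and logs can be sketched as follows. This is an illustrative model only, not the Platform's security API: the `Profile` class, the `FIELD_CATEGORIES` mapping, the category names and `read_field` are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Profile:
    name: str
    allowed_categories: frozenset  # data groups this profile may read


# Assumed profiles: a user with limited vision, an administrator with global vision.
USER = Profile("user", frozenset({"public"}))
ADMINISTRATOR = Profile("administrator", frozenset({"public", "sensitive", "critical"}))

# Assumed segmentation of fields by type.
FIELD_CATEGORIES = {"city": "public", "salary": "sensitive", "national_id": "critical"}

AUDIT_LOG = []  # every access attempt is recorded, whether allowed or denied


def read_field(who, profile, record, field_name):
    """Return a field value if the profile may see its category; log the attempt."""
    category = FIELD_CATEGORIES[field_name]
    allowed = category in profile.allowed_categories
    AUDIT_LOG.append({
        "when": datetime.now(timezone.utc).isoformat(),
        "who": who,
        "profile": profile.name,
        "field": field_name,
        "allowed": allowed,
    })
    if not allowed:
        raise PermissionError(f"{profile.name} may not read {category} data")
    return record[field_name]
```

Writing the log entry before the permission check is enforced means denied attempts are traceable too, which is usually what an audit trail needs.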

Policy and regulations principles

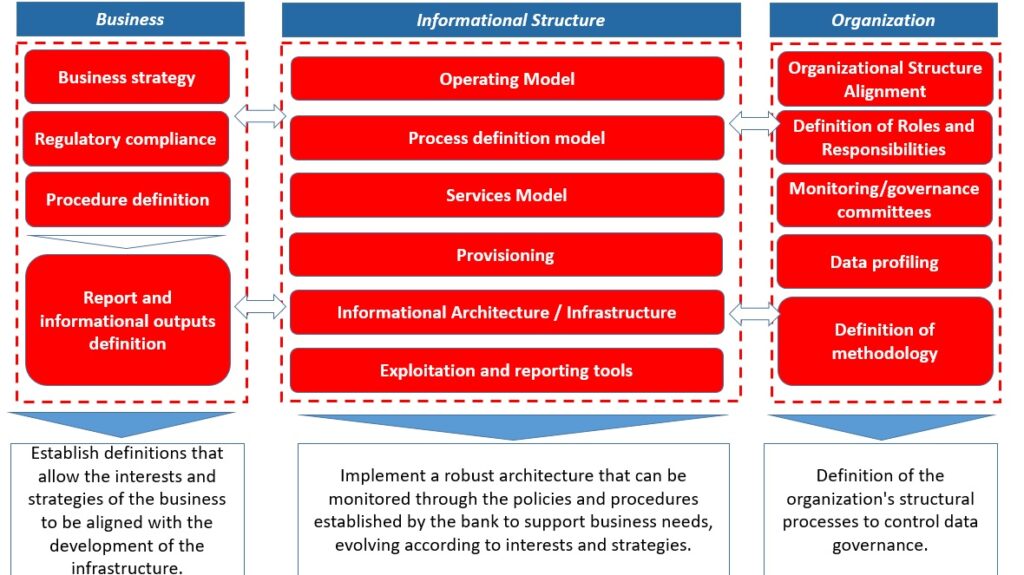

A relationship is defined between the business, the information structure and the organization:

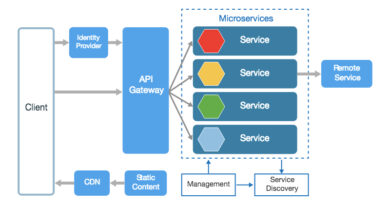

Data Governance Support in Onesait Platform

All of these principles, outlined for a correct data governance model, are supported in the Platform.

Would you like to know in detail how we provide this support? Take a look at our Developer’s Portal, where each principle is described in detail and with examples.